My mostly arbitrary picks of the best (part 2)

Lightbikes, Trash Can games and Mosquito Tracking were some of the submissions Microsoft received which I looked over in part 1.

Below are even more picks from the weird and wonderful pile of public proposals:

-

https://microsoftstudios.com/hololens/shareyouridea/idea/life-paint/

Vicmell6698dull ideas

Translation

https://microsoftstudios.com/hololens/shareyouridea/idea/hololens-translator/

Translation is always a popular use-case for AR systems.

It’s another case of user empowerment: expanding our human abilities through the use of wearable technology. Normally the idea is focused on replacing text in the user’s field of view, to let them glance at any foreign bit of text and, like magic, see it in their own language. We see this in systems such as Word Lens.

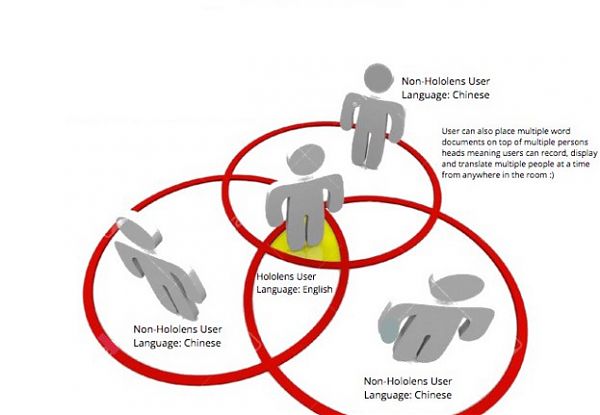

The idea here, however, is audio based: to not only do on-the-fly translation of spoken words, but to then position the resulting translated speech above people’s heads.

Skybit Orbital

Holo Drums

https://microsoftstudios.com/hololens/shareyouridea/idea/holo-drums/

While the above may require advanced neural network solutions for speech recognition, translation and synthesis, not all Hololens applications need to be so complex.

ToedPaladin9 proposes a virtual drum set. Track some sticks, trigger some sounds.

Pedals might be a bit trickier, but at a basic level whipping up an app like this should be simple, and allow a huge range of customization for the end user.
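As a rough illustration of the "track some sticks, trigger some sounds" part, here is a minimal sketch. It assumes the headset can give you the height of a stick tip once per frame; the function name `detect_hits` and the idea of a flat pad at a fixed height are my own simplifications, not anything from the proposal or a Hololens API.

```python
def detect_hits(tip_heights, pad_height):
    """Return the frame indices at which the stick tip crosses the pad going down.

    tip_heights: one height sample (metres) per frame for a tracked stick tip.
    pad_height:  height (metres) of the hypothetical virtual drum pad.
    """
    hits = []
    for i in range(1, len(tip_heights)):
        was_above = tip_heights[i - 1] > pad_height
        now_at_or_below = tip_heights[i] <= pad_height
        if was_above and now_at_or_below:
            hits.append(i)  # a real app would trigger the drum sound here
    return hits
```

Only the downward crossing counts, so lifting the stick back up does not re-trigger the sound.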

One additional idea I would add would be the option to hook it up to virtual spotlighting – synced to the beat of the user’s drumming.

https://microsoftstudios.com/hololens/shareyouridea/idea/escape-the-situation/

AR-Enhanced Tabletop RPGs

https://microsoftstudios.com/hololens/shareyouridea/idea/ar-tabletop-support-for-roleplating-games/

TheDarkElf007, along with a few others, suggested the use of AR applications to both enhance and streamline this process. Things like line of sight can be visually presented – and thus quicker to understand. Lesser-known rules and effects can be calculated in real time – instead of needing to be looked up. Context-specific information could also be displayed to each player individually – letting them see the information they need easily, without any other player knowing it.

There is almost endless scope to the possibilities of such applications, given the diverse range of scenarios and play styles such games encompass. AR apps should be able to enhance the games for longtime players, as well as streamlining the whole process for new players down to what’s important: telling the player if their pointy thing is pointy enough.

The Layered Earth

https://microsoftstudios.com/hololens/shareyouridea/idea/layered-earth/

The idea of conjuring up a 3D map or globe out of thin air and manipulating it is not a new one. It was depicted in sci-fi films and novels long before we had the technology to realize it.

3D Painting

https://microsoftstudios.com/hololens/shareyouridea/idea/3d-painting-4/

Proposes extending the traditional painting application into three dimensions, effectively making the artwork volumetric.

I like this idea a lot, as it’s something pretty much only possible with a device able to both input and output in full 3D. How else can brushwork be done in three dimensions, without constantly stopping to manipulate the z depth? With Hololens, there could be fluid three-dimensional strokes, no extra controls needed.

The only issue I can see is that such an app would be very computationally intensive, with large RAM demands.

The potential for high res voxel painting is very interesting though – it could almost be considered a new form of art, something that exists halfway between painting and sculpture.
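To put rough numbers on the RAM concern, here is a back-of-envelope sketch. It assumes the simplest possible storage, a dense cubic grid with 4 bytes (RGBA) per voxel; that layout and the function name `voxel_grid_bytes` are my assumptions, and a real app would more likely use a sparse structure that only stores painted voxels.

```python
def voxel_grid_bytes(resolution, bytes_per_voxel=4):
    """Memory needed for a dense resolution^3 grid (assumed RGBA, 1 byte per channel)."""
    return resolution ** 3 * bytes_per_voxel


if __name__ == "__main__":
    for res in (256, 512, 1024):
        mib = voxel_grid_bytes(res) / 2 ** 20
        print(f"{res}^3 voxels: {mib:,.0f} MiB")
```

Doubling the resolution multiplies the memory by eight, which is why a naive dense grid gets expensive so quickly.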

For more concepts see:

https://microsoftstudios.com/hololens/shareyouridea/ideas/

Author thomaswrobel

Categories Software, Hololens

Hololens Concepts: My mostly arbitrary picks of the best so far.

My mostly arbitrary picks of the best so far.

Microsoft should make a Hololens for dogs.

At least, that’s one of the many ideas Microsoft has received since taking public submissions for Hololens applications. While submitting some of my own, more modest, proposals, I took the time to do some of my own digging.

What I found was an eclectic pile of brain dumps from a wide range of people, ranging from the trivial to trying to save the world. Some were unrealistic in scale, some humorously fell outside the scope of what the Hololens could reasonably be expected to do (i.e. it can’t smell things – at least, not unless the dog will come fitted as standard).

Other submissions I came across seemed suspiciously like auto-generated spam, or at least it’s hard to tell even their vaguest intention.

Out of the ideas I did understand, I made a selection of ones I found the most interesting, plausible, or that just appealed to me on some fundamental level.

Book / DVD / Stuff finder

https://microsoftstudios.com/hololens/shareyouridea/idea/library-book-finder/

https://microsoftstudios.com/hololens/shareyouridea/idea/stuff-locator

A few different people have suggested applications to help find stuff about the house.

With the Hololens mapping the environment, combined with now fairly common OCR techniques, finding book or DVD titles should be well within the possibilities of an app. Provided the object hasn’t moved since the last time the Hololens application saw it, it should be simple to provide an on-screen indication of where it is.
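The bookkeeping side of this can be sketched in a few lines. The sketch below assumes some OCR step has already produced a text string and a room position for each spine or cover it read; the `StuffIndex` class and its methods are hypothetical names of mine, and the fuzzy lookup uses Python's standard `difflib` to tolerate misspelt queries.

```python
import difflib


class StuffIndex:
    """Remembers where each piece of recognised text was last seen."""

    def __init__(self):
        self._last_seen = {}  # lower-cased text -> (x, y, z) position in metres

    def record(self, text, position):
        """Store (or overwrite) the last known position for some OCR'd text."""
        self._last_seen[text.lower()] = position

    def find(self, query, cutoff=0.6):
        """Return the last known position of the closest title, or None."""
        matches = difflib.get_close_matches(
            query.lower(), list(self._last_seen), n=1, cutoff=cutoff
        )
        return self._last_seen[matches[0]] if matches else None


index = StuffIndex()
index.record("The Hobbit", (1.2, 0.9, 3.0))
index.record("Blade Runner", (0.4, 1.5, 3.1))
```

A query such as `index.find("the hobit")` would return the position recorded for "The Hobbit", which the app could then highlight.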

Going further, it might eventually be possible to locate objects not defined by recognizable text, presumably using neural-network-based object recognition. Google’s recently open-sourced TensorFlow could help developers here.

The effectiveness of this sort of “find me” application would depend very much on slickness: can the end user specify that they want to find their keys quicker than they can spot them where they left them?

Trash can fun and games

https://microsoftstudios.com/hololens/shareyouridea/idea/trash-can-fun-and-games/

FamilyGuyJJ80 suggests making a trashcan erupt in effects, or keep a score, whenever rubbish is chucked into it.

It’s a very simple concept that really demonstrates how any arbitrary thing in life can be gamified or skinned. It might have no practical purpose, and offer only moderate amusement, but it shows somewhat the “casual possibilities” opened up when your world is half virtual.

Light Bikes

https://microsoftstudios.com/hololens/shareyouridea/idea/holosnake-tron-light-cycles/

Given that the Hololens knows where the user is in relation to their environment, it would be fairly easy to plot a path of their recent movements. And what if their trail was a wall streaming out from behind them – fatal to all who touch it?

That’s Surfsalot’s idea: recreating the classic Lightbikes scene from the film Tron. While using Hololens on bicycles might be just a little risky, Surfsalot points out it could also be played with people just running around – or even in wheelchairs. It might even be the start of a new sport – AR tech making a previously impossible game now real. Given Tron was about people being pulled into a digital world, it’s somewhat fitting that one of its concepts can be made real by the reverse.
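The core game rule is just a geometry test: did a player's latest step cross anyone's trail? Here is a minimal sketch, assuming positions are tracked as 2D floor coordinates and each trail is a list of points; the function names are mine, and the test deliberately ignores merely touching endpoints so a player does not collide with the segment they have just laid down.

```python
def _orient(a, b, c):
    """Signed area test: >0 if c is left of line a->b, <0 if right, 0 if collinear."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def segments_cross(p1, p2, q1, q2):
    """True if segment p1-p2 properly crosses segment q1-q2."""
    d1 = _orient(q1, q2, p1)
    d2 = _orient(q1, q2, p2)
    d3 = _orient(p1, p2, q1)
    d4 = _orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0


def hits_trail(step_start, step_end, trail):
    """True if the step from step_start to step_end crosses any trail segment."""
    return any(
        segments_cross(step_start, step_end, a, b)
        for a, b in zip(trail, trail[1:])
    )
```

For a trail of `[(0, 0), (4, 0), (4, 4)]`, a step from `(2, -1)` to `(2, 1)` crosses the first wall, while a step from `(1, 1)` to `(3, 1)` runs alongside it safely.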

Realistically, this concept would be better pulled off by an organisation renting out a large disused space, and providing the equipment. And you never know, maybe using bicycles might be possible to do safely after all, if someone else’s idea takes off.

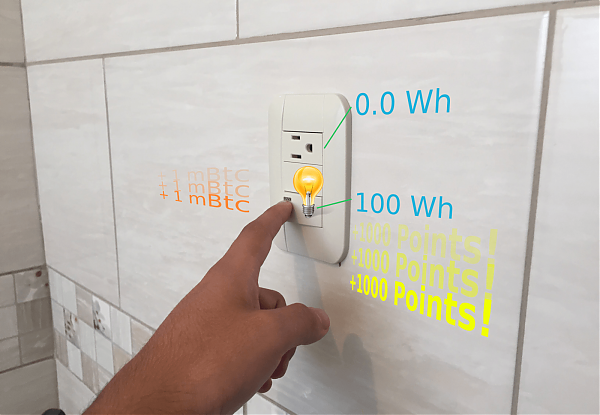

Energy Saving: The Game

https://microsoftstudios.com/hololens/shareyouridea/idea/energy-saving-the-game/

Gamification is a powerful tool to incentivize people to do previously boring tasks. In some ways it’s a hack of the human brain. We like points and scores and, indeed, little bells that tell us we are doing a good job. Turning energy saving into a game is one case where this human tendency can be exploited for good.

The question is, however, would the gains here offset the energy use of the Hololens itself during the period using the application?

Mosquito Detector

https://microsoftstudios.com/hololens/shareyouridea/idea/mosquito-detector/

While the degree of precision might be beyond the first generation of Hololens tech, I still love the idea of such advanced tech being used for such a trivial life problem. It’s like the whole of human civilization has been building up to giving us all the ability to swat mosquitoes out of the air.

Wizard duel

https://microsoftstudios.com/hololens/shareyouridea/idea/harry-potter-duel-simulator/

Milanvan Londen0 imagines wizard duels being conducted with the Hololens.

The beauty of a wizard duel, as depicted in Harry Potter as well as many classic movies and novels, is that the participants mostly stay put in one place, with no physical contact.

As a concept it’s somewhat ideal for local multiplayer in restricted spaces – making it a not too dangerous proposition for parents.

Players could use gestures to cast spells and counter-spells. Any stick-like object could be skinned into a wand.

Some popular depictions of wizard duels involve picking the right animal to counter the opposition’s – so there could even be a light educational aspect as to predator/prey relationships in the animal kingdom. That’s how it could be sold to parents anyway.

Project Skipper (AR pets)

https://microsoftstudios.com/hololens/shareyouridea/idea/project-skipper-augmented-reality-pets/

An idea simultaneously invented by a few people, but it bears repeating: virtual pets that react and “exist” in people’s living rooms are sure to be popular. This idea has perhaps been best depicted by the anime series Dennou Coil, where an augmented reality virtual pet was an important part of the series.

Hour Hopper describes this idea well, and also makes an appeal for it to bear the name Skipper in some way – as a tribute to his deceased dog.

Ping pong

https://microsoftstudios.com/hololens/shareyouridea/idea/holographic-ping-pong

And why stop at ping-pong? Pool, snooker and even air hockey would be equally doable with just a Hololens and an empty desk.

For more concepts see:

https://microsoftstudios.com/hololens/shareyouridea/ideas/

Author thomaswrobel

Categories Software, Hololens

Mirrors in a half virtual world

Put simply, when you are wearing a camera on your head, all mirrors you look at also become cameras.

The consequence of this is that a simple pocket mirror can be used as a communication device, allowing Skype-like communications anywhere.

Because mirrors reflect the full lightfield, any HMD using optical systems for 3D measurements can also exploit the mirror to record you in full 3D. This opens up possibilities like replacing a background by using a simple depth test to create a mask.
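The depth-test mask is simple enough to sketch directly. This assumes you already have a colour image and a per-pixel depth image of the same size, here as plain nested lists; the function name `replace_background` and the 1.5 m threshold are illustrative assumptions of mine, not values from any real device.

```python
def replace_background(colour, depth, background, max_depth_m=1.5):
    """Keep pixels nearer than max_depth_m; swap everything else for the background.

    colour, depth and background are equally sized 2D lists (rows of pixels).
    """
    return [
        [c if d < max_depth_m else b for c, d, b in zip(c_row, d_row, b_row)]
        for c_row, d_row, b_row in zip(colour, depth, background)
    ]
```

Anything the depth sensor sees as close (you) survives, and anything further away (the room behind you) is replaced.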

In short, if AR is a way to have everything everywhere, then mirrors become a cheap, simple way to talk to anyone anywhere... while also looking like anything you’d like.

Author thomaswrobel

Categories Software, Augmented Reality

Designing For a Half Virtual World

Designing For a Half Virtual World:

Part 1 – FOV

When developing for any new medium like this, it’s important to take into account not just the new possibilities but also any design constraints you need to work within.

One constraint of the Hololens that has prompted a lot of discussion in the press is its field of view.

As humans we have a very wide FOV, about 180 degrees, but optically transparent AR devices like the Hololens are currently more limited. While Microsoft has not published an official spec sheet for the Hololens, it’s estimated by some users to be about 30 degrees.

Some people have used this value to write off the Hololens completely – a point I firmly disagree with. Some hands-on experiences back me up.

But the FOV can’t be ignored completely either.

As a developer it is important to consider the FOV the user has, where their eyes will be, and to try to immerse them in a world that limits how much attention is drawn to the edges of the controllable portion of that view.

Because of this I have written a little guide to what I feel will be useful things to consider in regards to the visible space when developing a Hololens app.

Draw the eye – consider where the eye is at all times.

A lot of our eye-movement is subconscious. Our eyes dart about while taking in the environment, often being automatically drawn to anything out of the ordinary.

To a large extent this fact can be used to precisely control where the user’s gaze will be, even if it’s only for a brief moment.

For example: let’s say you’re designing a game and, as in Microsoft’s own demo, a monster is going to go crashing through a real-life wall. If this is done suddenly without any notice, the player could be looking at the wall off-center... or even in completely the wrong direction.

Instead, by having a bashing sound come from the wall, then letting a crack appear in the center of where it will break, you control the user’s eye. The noise of the bash draws the user’s attention to the general direction, then the crack draws their gaze to exactly where you want it.

Notice the crack that appears first?

Ideally, you then break the wall at the moment the user’s eyes are looking directly at the crack (which of course you can detect, as HMDs like the Hololens need to know where people are looking).
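Detecting that moment comes down to comparing two directions. Here is a minimal sketch, assuming the headset hands you a head position and a gaze direction vector each frame; the function names and the 5 degree tolerance are my own assumptions, and both vectors are assumed to be non-zero.

```python
import math


def angle_between_deg(u, v):
    """Angle in degrees between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    # Clamp to guard against tiny floating point overshoot before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def is_looking_at(head_pos, gaze_dir, target_pos, tolerance_deg=5.0):
    """True if the gaze direction points at target_pos within the tolerance."""
    to_target = tuple(t - h for t, h in zip(target_pos, head_pos))
    return angle_between_deg(gaze_dir, to_target) <= tolerance_deg
```

In the wall example you would call `is_looking_at` with the crack's position every frame, and only start the break-through animation once it returns true.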

Because the user’s gaze is now centralized as the creature emerges, you have ensured they see the maximum possible amount of the creature. If the creature is smaller than the FOV, they will even see it completely, without any clipping that might spoil the illusion that it’s really in the room with them.
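Whether something is "smaller than the FOV" depends on both its size and how far away it is. As a quick sketch of the arithmetic, using the roughly 30 degree estimate mentioned earlier as the default (an estimate, not an official figure; the function names are mine):

```python
import math


def angular_size_deg(size_m, distance_m):
    """Angle an object of size_m spans when viewed face-on from distance_m."""
    return math.degrees(2 * math.atan(size_m / (2 * distance_m)))


def fits_in_fov(size_m, distance_m, fov_deg=30.0):
    """True if the object's angular size is within the display's field of view."""
    return angular_size_deg(size_m, distance_m) <= fov_deg


def min_distance_to_fit(size_m, fov_deg=30.0):
    """Closest distance at which an object of size_m still fits in the FOV."""
    return size_m / (2 * math.tan(math.radians(fov_deg) / 2))
```

With these assumptions a 1 m wide creature spans about 53 degrees at 1 m, and needs to be roughly 1.9 m away before it fits inside a 30 degree view.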

This technique can be applied to general interface elements too. If it’s important for a user to see a certain bit of information, a brief indication of where it will appear before it appears gives the user the fraction of a second needed to move their gaze towards it.

Remember also not to move things quicker than the user’s ability to track them – the user will naturally keep interesting scene elements centered in their view as long as they don’t whizz off too quickly.

Consider dynamic sizing for GUI elements.

While it will not always be possible or appropriate, it is worth considering if scene elements can be sized to make them more likely to keep within the frame. GUI elements are a good candidate for this.

For example, consider a marker on the ground:

This doesn’t look too good when close up.

However, if you keep the marker scaled to display-space rather than world-space:

It still works well as a marker, without cluttering the field of view, or being clipped out of its edges.

Completely 2D labels, meanwhile, can just be aligned to keep within the FOV.
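Scaling to display-space just means picking the world size that keeps the marker's angular size constant as the user approaches. A minimal sketch, where the 3 degree target and the function name are my own assumptions:

```python
import math


def world_size_for_constant_angle(distance_m, angle_deg=3.0):
    """World-space size that makes an object span angle_deg at distance_m."""
    return 2 * distance_m * math.tan(math.radians(angle_deg) / 2)
```

Recomputing this each frame from the current distance means the marker shrinks in world terms as you walk up to it, but always covers the same small slice of the display.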

Ensure the user can locate stuff

On a more practical level, in some situations it might be important for the user to be able to quickly locate virtual elements outside their immediate view.

As humans our wide FOV helps us detect movement or changes in the environment from a wide range of angles. The virtual overlay in current HMDs, however, is just focused on the area in front of the user.

For this reason additional cues should be provided to help locate elements outside the field of view.

The most obvious possibility here is sound. Fortunately the Hololens has good 3D spatial sound, letting users find elements by ear alone. We can hope the same will be said for future AR HMDs.

In addition to sound, carefully made targeting or “radar like” visual indicators could be used.
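The logic behind such an indicator can be sketched from a top-down view. This assumes a flat 2D map with x pointing east and y pointing north, and a heading measured clockwise from north; those conventions, the function names and the 30 degree default are all my assumptions.

```python
import math


def relative_bearing_deg(user_pos, heading_deg, target_pos):
    """Signed angle from the user's heading to the target, in [-180, 180).

    Positive means the target is to the user's right, negative to the left.
    """
    dx = target_pos[0] - user_pos[0]
    dy = target_pos[1] - user_pos[1]
    bearing = math.degrees(math.atan2(dx, dy))  # clockwise from north
    return (bearing - heading_deg + 180.0) % 360.0 - 180.0


def indicator(user_pos, heading_deg, target_pos, fov_deg=30.0):
    """Return (hint, distance): hint is 'in_view', 'left' or 'right'."""
    rel = relative_bearing_deg(user_pos, heading_deg, target_pos)
    distance = math.hypot(target_pos[0] - user_pos[0], target_pos[1] - user_pos[1])
    if abs(rel) <= fov_deg / 2:
        return ("in_view", distance)
    return ("right" if rel > 0 else "left", distance)
```

The hint says which edge of the display the arrow should sit on, and the distance could drive its size or length.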

It might take some experimentation in order to achieve a good balance between minimal screen use and intuitive indication of where something is.

One way to do this would be a depth-aware target, with prongs indicating the length and distance to the object:

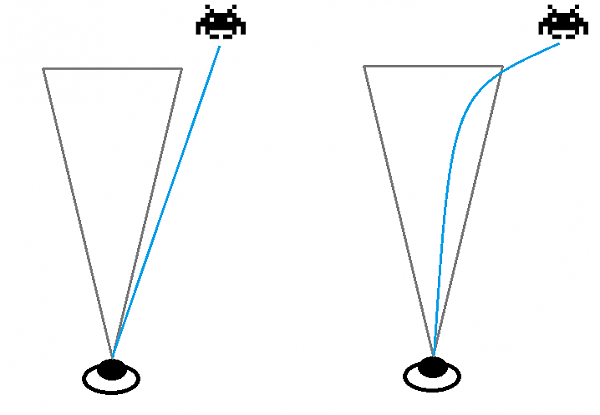

(crude mock-up)

Consider the path of motion.

If something is heading towards the user, it might work better to use a curved path towards the head, rather than a linear one:

This would help ensure the user sees it earlier, and that it spends less time at the clipped edge of the user’s line of sight.
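One simple way to get such a curve is a quadratic Bezier, with the control point placed somewhere out in front of the user so the object swings into the middle of the view before closing in. This is only one possible approach, and the function names and the choice of control point are my assumptions.

```python
def bezier_point(start, control, end, t):
    """Point on a quadratic Bezier curve at parameter t in [0, 1]."""
    u = 1.0 - t
    return tuple(
        u * u * s + 2 * u * t * c + t * t * e
        for s, c, e in zip(start, control, end)
    )


def curved_path(start, control, end, steps=10):
    """Sample steps + 1 points along the curve from start to end."""
    return [bezier_point(start, control, end, i / steps) for i in range(steps + 1)]
```

For example, with the head at the origin looking along +z, an object starting off to the side at `(-4.0, 0.0, 4.0)` and a control point at `(0.0, 0.0, 6.0)` would arc round in front of the user rather than cutting in diagonally from the edge of the view.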

Finally… Don’t worry!

Remember: content is king – if your application is immersive and offers a gripping experience the user will be paying less attention to things that don’t quite fit.

These techniques are just to help ease the transitional period when the user is getting used to the experience, and to help ensure things aren’t too obviously out of place. Once the user is hooked on your experience anyway, it largely doesn’t matter. As humans we quickly adapt and get used to new experiences – and as developers we should have fun making them!

Author thomaswrobel

Categories Hololens, Software

Low tech AR business cards

Author thomaswrobel

Categories Augmented Reality