One day we will live in a half-virtual world.

I have long thought this. The idea slowly crept up on me rather than arriving as a revelation, but I have been thinking of AR tech in one way or another since my childhood.

To be precise, it was the moment I found out LCD screens could have a transparent back. This filled me with awe – the idea we could have something transparent, yet still turn part of it black amazed me.

I wish I had kept some of the doodles I drew back then. I did a crude sketch of two “Game Boy” LCD displays in front of a pair of glasses, the idea being you could “draw in the air” using a control pad.

Of course, it wouldn’t have worked. I didn’t consider the focusing problems – you’d just get a smudge.

But it was always in my mind – the idea of sketching and drawing in the air around us.

The Difficulties.

I studied 3D Computer Animation later. One of the things I enjoyed doing most was compositing virtual objects into real film footage.

Although my university only gave it a cursory glance, it was nonetheless quickly apparent just how tricky it was to do:

- You had to deal with perspective matching, ensuring the virtual camera and the real camera are synced as closely as possible.

- You had to deal with occlusions, ensuring virtual objects pass behind real objects. This typically means creating invisible virtual objects the same shape as the real ones and using them as a mask.

- Possibly the easiest: you also had to deal with the direct light, positioning virtual lights in about the same place as the real lights so the correct parts of virtual objects are lit.

- You had to deal with shadows – virtual objects should cast shadows on real objects and vice versa. If you’re lucky, you can reuse some of the mask objects above.

- You had to at least approximately deal with reflections. Even if there’s no outright mirror, many materials are slightly reflective and should have some sort of emulation of what they would be reflecting. Again, this might be a virtual object needing a reflection in a real one… or a real object reflected in a virtual one.

- Somewhat related to the above, the lighting and colouring of the scene should match. This ideally means radiosity simulation – making the virtual objects gain the “expected reflected light” from objects around them.
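The occlusion item in the list above can be sketched as a per-pixel depth comparison. This is only a rough illustration, not how any particular compositing package does it: the function name `composite`, the tiny nested-list "images" and the assumption that we already have a depth value for the real scene (rendered from the invisible stand-in objects) are all mine.

```python
# Hypothetical sketch: composite a virtual layer into real footage using depth.
# real_depth is assumed to come from rendering the invisible "mask" objects.

INF = float("inf")  # depth value meaning "nothing virtual at this pixel"


def composite(real_rgb, real_depth, virt_rgb, virt_depth):
    """Return a frame where a virtual pixel is shown only if it is nearer."""
    out = []
    for y, row in enumerate(real_rgb):
        out_row = []
        for x, real_pixel in enumerate(row):
            if virt_depth[y][x] < real_depth[y][x]:
                out_row.append(virt_rgb[y][x])  # virtual object is in front
            else:
                out_row.append(real_pixel)      # real object occludes it
        out.append(out_row)
    return out


# One row of three pixels: a virtual red object at depth 2.0 covers pixels
# 1 and 2, but a real object at depth 1.0 sits in front of pixel 2.
real_rgb = [[(90, 90, 90), (90, 90, 90), (40, 40, 40)]]
real_depth = [[5.0, 5.0, 1.0]]
virt_rgb = [[(0, 0, 0), (255, 0, 0), (255, 0, 0)]]
virt_depth = [[INF, 2.0, 2.0]]

frame = composite(real_rgb, real_depth, virt_rgb, virt_depth)
```

Only the middle pixel ends up red; the third keeps the real footage because the real object is closer to the camera. Shadows, reflections and colour matching all need far more than this.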

So with all these things, even with unlimited computing power and non-realtime rendering there are six tricky things to take into account… oh wait… I forgot focus. Make that 7.

Convincingly mixing the real and the virtual is hard. Wait… motion blur… 8.

So how close have we come to pulling any of this off in real time?

Prehistory

To cover the complete history of AR developments is beyond the scope of this little ramble. Far too many researchers are worthy of credit for their groundwork, writers for their inspiration, and pioneer coders for their early implementations.

I will however cover what I consider some recent significant commercial hardware products that have been stepping stones to ubiquitous AR computing.

The Gizmondo

My first awareness of a commercially available AR device was the Gizmondo handheld gaming system in 2005.

By today’s standards it was very underpowered. But it had a built in camera, significantly more power than other handheld devices at the time, and was closer to PC architecture in terms of chipsets making it fairly attractive to develop for.

In terms of AR there were a few demos of overlaying images from the camera with very rough tracking. Interestingly, the system lacked any accelerometers, meaning any information on its movement had to be deduced purely from its camera.

I was always a gamer at heart, so this seemed like an attractive buy for me. I thought “I’ll get one when there’s the first interesting app for me”.

That never came.

In fact, the Gizmondo flopped. It was a tough market to break into, and there are numerous reasons why it failed… but perhaps it was mostly because one of the founders (Mikael Ljungman) was convicted of fraud and its executive, Stefan Eriksson, was the leader of a Swedish Mafia group. (https://en.wikipedia.org/wiki/Stefan_Eriksson)

Ferrari crashes, missing people, yachts lost at sea… the extent of the criminal web is really quite amazing.

But not a good start to this list really.

The bright side is Gizmondos lived on as cheap devices for AR research. Some examples:

http://www.andreworlando.com/?p=40

http://handheldar.icg.tugraz.at/caleb_gizmondo.php

About 25,000 Gizmondos were sold.

Android phones

I got my first smartphone quite late – 2010. But I was coding on it the same day. AR excited me and I was keen to try implementing some of the ideas from the ‘Everything Everywhere’ paper I wrote back in 2009. My phone was an HTC Legend and it was pretty easy to set up an AR overlay on the camera view. However, like many phones even today, the compass was pretty imprecise, making accurate POIs near impossible to do.

Still, I coded up my first AR app ‘Skywritter’ – an open communication protocol allowing people to selectively share geolocated annotations or 3d models with their friends. Unfortunately I built this on the WFP protocol, as it had a lot of nice properties for this use-case. (Specifically: selective sharing, realtime, federated, completely open. In short, anyone could make a client, anyone could make a server, anyone could host data and they could all talk to each other.)

Sadly the death of Google Wave and the transfer to Apache broke the needed client server protocol and slowed development to a crawl. (Unpaid volunteers continue to work on the protocol under Apache, but it’s been 3 years now and there is no sign yet of the needed client server protocol standard. In fact, their client and server are still tied together significantly in the code base, slowing possible developments for clients.) But I am getting side tracked here…

Contrary to my own experience above, it’s tempting to list the iPhone, as that was many people’s first experience with smartphones. However, for the first year or two of the iPhone’s life the Camera/Video API was not available to developers – meaning the first AR apps showed up on Android phones.


I would like to think the popularity and potential of those first apps encouraged Apple to speed up the time frame of their own camera support.

Needless to say, all smartphones and tablets now have numerous AR apps and games. How close they come to the ideal AR experience varies. Most these days have rough positioning and perspective matching. We are starting to see some apps go further however, with markerless tracking and even occlusions being dealt with.

While it’s debatable how useful some, or even most, of these applications are, it’s clear they have played a big role in acclimatizing people to the idea of mixing the real and the virtual, as well as being an important testing ground for ideas.

With 1/7th of the world’s population owning a smartphone, it’s safe to say AR became mainstream at this point – and possibly prompted many to google the term.

The Nintendo 3DS

Game systems had been playing with cameras for a while. The original Game Boy had a camera with some very simple mini games. The PlayStation had its ‘eye’, which first introduced gesture controls for many people.

However, it wasn’t till Nintendo released the 3DS system in 2011 that the original promise of the Gizmondo was fulfilled. The system shipped with a bunch of AR games built in for all users, and many more as paid downloadables. Needless to say, I bought one straight away.

On loading the system for the first time I was particularly delighted to see ‘AR Games’ was prominently displayed on the menu. Not hidden away, but proudly shown instead. AR was clearly mainstream – at least for gamers. (The previous Nintendo handheld has sold 154 million units, effectively making it the joint-best selling videogame system of all time. In terms of videogames, it’s as mainstream as possible.)

The system also came with various marker cards for tracking purposes. While the auto-stereoscopic screen might have been the feature Nintendo was pushing in commercials – it also was clear now that the company that owns Pokemon saw potential in AR.

The 3DS has so far introduced about 50 million people to AR.

2014

2014 stood out as a highly significant year for AR. We had plenty of HMDs announced, some released, investments made. We saw increased use of it in the media, and some significant practical demos of related tech.

It also became very clear, if it wasn’t already, that optically transparent AR HMDs ‘won’, with every major player focusing on them.

Software developments:

Interoperability format

Metaio, Layar and Wikitude banded together to define a shared standard for augmented data. This would allow developers to make and update only a single augmented layer and yet have it viewable on quite a few different AR apps.

This was, I felt, a massive step forward. I had previously been at W3C meetings to discuss AR web standards, and while lots of positive ideas were put forward it was clear we needed individual browser makers to step up and implement a ‘de facto’ standard in order for progress to be made.

I quite firmly believe that browser makers should not compete on content. Everyone benefits from content being accessible to all users. Instead, just like with the flat web, browser makers should compete on features, stability and interface.


Layar gets bought out by Blippar

While no doubt a boost to Layar, it meant the standard above was likely abandoned.

Needless to say, I was pretty upset with this development. Layar is a big player with a huge userbase. At this point any hope for even a de facto standard needs their co-operation.

Project Tango

Project Tango is a positioning and short range area mapping system developed by Google, using depth sensors to build up a point cloud as the device moves about. It seems inconceivable that Google hasn’t developed this with its own AR applications in mind. Sure, mapping itself is a big thing for Google, but why would they be building it into consumer devices if it was just for their Street View walkabouts?

It’s also very likely that Microsoft is using similar tech for their HoloLens positioning.

Mapping the local environment is a huge step towards creating the ideal AR experience. Aside from more robust positioning, it makes dealing with static occlusions and shadow casting trivial. If the point cloud you are generating has colour data (as Tango does), you could even use it for very rough reflection maps and radiosity simulation.

Devices with Tango built in are supposed to be coming out over 2015, almost certainly for AR use.
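To show why a point cloud helps with static occlusions, here is a minimal sketch of projecting mapped points into a coarse depth buffer and testing a virtual fragment against it. None of this is Tango's actual API: the pinhole camera looking down +z, the function names and the numbers are all assumptions for illustration.

```python
import math

# Hypothetical sketch: turn a point cloud into a coarse depth buffer using a
# simple pinhole camera at the origin looking down +z.


def depth_buffer(points, width, height, focal):
    """Keep the nearest depth landing on each pixel (inf = nothing mapped)."""
    buf = [[float("inf")] * width for _ in range(height)]
    cx, cy = width / 2, height / 2  # principal point at the image centre
    for x, y, z in points:
        if z <= 0:
            continue  # point is behind the camera
        u = math.floor(focal * x / z + cx)  # perspective divide
        v = math.floor(focal * y / z + cy)
        if 0 <= u < width and 0 <= v < height:
            buf[v][u] = min(buf[v][u], z)
    return buf


def is_occluded(buf, u, v, virtual_depth):
    """A virtual fragment is hidden if mapped real geometry is nearer."""
    return buf[v][u] < virtual_depth


# Two points fall on the same pixel (the nearer one wins), one falls elsewhere.
cloud = [(0.0, 0.0, 2.0), (0.0, 0.0, 3.0), (2.0, 0.0, 4.0)]
buf = depth_buffer(cloud, width=4, height=4, focal=2.0)

hidden = is_occluded(buf, 2, 2, virtual_depth=2.5)   # real surface at 2.0
visible = is_occluded(buf, 3, 2, virtual_depth=2.5)  # real surface at 4.0
```

A real system would have to fill the gaps between points and cope with moving objects, which is why I only call this good for static scenery.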

There is a detailed breakdown of one Tango prototype here

https://www.ifixit.com/Teardown/Project+Tango+Teardown/23835

MagicLeap

Still not much is known about this group’s project. There has been a lot of hyperbolic language and talk of what they are not (“will not be a clunky headset”, “not a screen”), and strong hints that they are working on reproducing the full lightfield of a virtual object.

Reproducing a lightfield is no trivial thing. Most screens output the same colour in all directions from each pixel.

Autostereoscopic screens can selectively output different colours in two directions, typically used to produce a 3d effect, using either a lenticular lens (remember those children’s collectable 3d cards, often in cereal packets? You could feel the ridges of the lens by rubbing your finger over it) or a parallax barrier (like Nintendo’s 3DS uses).

To reproduce a full light field means to selectively send different colours of light out at all the different angles from each pixel.

So the image on a ‘lightfield screen’ would change based on the angle you look at it – in fact, it would be exactly like looking through a window to a real scene on the other side. No matter how your head moves, the other side looks perfectly real and at the correct perspective.
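The “window” behaviour can be sketched in one dimension with a bit of ray geometry. This is a toy model under my own assumptions (a flat striped backdrop behind the screen, made-up distances and function names), not a description of how any real lightfield display is driven.

```python
# Hypothetical 1D sketch of a "window-like" lightfield pixel.
# The screen lies at z = 0, the eye is eye_dist in front of it, and the
# virtual scene is a flat backdrop scene_depth behind it.


def backdrop_hit(pixel_x, eye_x, eye_dist, scene_depth):
    """Where the ray from the eye through a screen pixel meets the backdrop."""
    return pixel_x + (pixel_x - eye_x) * scene_depth / eye_dist


def pixel_colour(pixel_x, eye_x, eye_dist=0.5, scene_depth=1.0):
    """The backdrop is striped: red where x < 0, blue everywhere else."""
    hit = backdrop_hit(pixel_x, eye_x, eye_dist, scene_depth)
    return "red" if hit < 0 else "blue"


# The very same pixel (x = 0.1) seen from two head positions.
seen_from_left = pixel_colour(0.1, eye_x=-0.2)   # ray lands right of centre
seen_from_right = pixel_colour(0.1, eye_x=0.3)   # ray lands left of centre
```

A normal screen would have to pick one of those two colours for that pixel. A lightfield screen has to emit both at once, each in its own direction.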

The closest tech I can think of to this is the MIT technique of using two layers of display with one of them partly acting as a parallax barrier to the other. So rather than just virtual slits, a dynamically changing image on the front layer helps select the angle of the light allowed through from the back:

http://web.media.mit.edu/~mhirsch/hr3d/

But it’s hard to see how this sort of technique could ever work in an HMD. Maybe MagicLeap has found a way to do the equivalent with projectors?

Either way, with a massive $540 million investment from Google, it is likely they showed something of interest.

Hardware Developments

Metaglass / SpaceGlass

An impressive attempt by a small group to ‘do it all’. Using an HMD by Epson, together with gesture sensors and bespoke software, they are almost certainly the closest in terms of actually buyable hardware to the dream of AR.

The team’s chief scientist is Steve Mann – almost certainly the most experienced HMD user in the world as well as a huge contributor to the field of computer vision.

http://en.wikipedia.org/wiki/Steve_Mann

Pictured is their ‘Meta1’ developer device on the left and their more expensive ‘Pro’ version on the right. Both of these have been demoed working and there’s hands on impressions online.

One of the early apps they demoed showed sculpting the profile of a vase in mid air with hand gestures, the final result being output to a 3d printer.

Currently the devices are tethered to a computer, but they plan to release a separate module to allow them to be used out and about – making it in principle a very flexible device indeed.

Meta recently raised $23 million from investors. Bargain.

Telepathy

To be honest, this one’s been something of a disappointment. It was initially shown as a hyper slick direct competitor to Google Glass.

It slowly evolved into the “Telepathy Jumper” version, which will be released in March 2015.

While I still hope it can make a last minute comeback, if Google aren’t confident enough to release Glass as it stands to the wide public, I am not sure how this design is supposed to be a success. Its software is apparently focused around remote skill/knowledge sharing, which is certainly a good use-case, but it will need some powerful demos rather than just a compelling vision to win over the public now.

Epson

The Moverio BT-200. Designed more for professional use than for any consumer, it nonetheless showed quite some potential for specific fields. It’s also used as the base for a few other products.

http://www.epson.com/cgi-bin/Store/jsp/Landing/moverio-bt-200-smart-glasses.do

ORA Smart Glass

A single screen smartglass that has the unique design that it can pivot its display between being in the center of your vision or at the bottom.

The glasses also feature photochromic lenses that darken in sunlight – potentially very useful to keep the display reasonably visible.

https://www.kickstarter.com/projects/optinvent-ora1/ora-1-smart-glasses-developer-version

Vuzix

Vuzix has long been making HMDs, video goggles for the public and AR HMDs for military use. For the last few years they have been working on AR goggles for the consumer market and recently got a $24.8 million investment from Intel. Meanwhile Nokia inked a deal with Vuzix to bring maps to smart glasses.

In short, Vuzix was there before it was cool and is clearly a very important company to watch.

CastAR

The most unusual, and thus the one I find most interesting. Created by ex-Valve electronics whiz Jeri Ellsworth, it uses special reflective material and a head mounted projector to create the illusion of 3d objects at roughly the correct depth of focus. Interestingly, the material only reflects light back in the exact direction it came in at – allowing many different devices to see different images on the same surface at once. It also means ambient light is far less of an issue, as light from other directions isn’t seen.

While the restriction of needing a special surface rules out many AR uses, it also lets this device be lighter and cheaper, cause less eye strain and have a wide field of view.

CastAR can be ordered now for $350ish together with the surface and an interesting looking controller: https://technical-illusions.myshopify.com

http://www.geeky-gadgets.com/castar-versatile-ar-and-virtual-reality-system-start-shipping-24-11-2014/

Sony SmartGlasses

Sony has been showing off Smartglass prototypes and concepts for a few years; one they showed in 2014 is a monocle clip-on design. Recently they announced a more traditional sunglass-like design with similar functions to Google Glass.

Previous versions were designed to give extra information while watching tv-shows. It’s unknown what their final target uses will be, but it’s safe to say Sony will be at least considering uses with its highly profitable games division – which already has experience with gesture based gaming through the Eyetoy.

Lumus DK40

Lumus seems to be concentrating on delivering the optical components for other HMD makers. Despite this, they have shown off prototypes which combined their technology with technology from eyeSight, resulting in a purely gesture controlled HMD which reportedly functions quite well. http://www.engadget.com/2014/02/25/lumus-and-eyesight-deal/

Atheer AiR Glasses

I couldn’t find much, if any, solid recent information on these glasses. They seem to be following a similar design to the Meta/SpaceGlasses, but with far fewer details and no(?) hands-on impressions they are either at an earlier stage of development or being far more stealthy.

Google Glass

Everything’s been said. I could grumble about how the press invented the “glasshole” term long before any of them would have seen a person using one, let alone a person being rude due to it. But that seems futile at this point. Glass is in development still, people know what it is, and not everything needs to be compared to it.

Oculus Rift

Not AR. But it is an electrical device worn on the head, so apparently there is a legal requirement it has to be mentioned with these other devices.

2015

Which brings us to now. Or, rather, the future. Which is now.

The HoloLens

At the time of writing Microsoft has just unveiled its “Hololens” system: an HMD and, more significantly, AR support as a native feature of Microsoft’s new OS, Windows 10ish.

I am not lucky enough to have had a go with the system myself, so I urge people to read some of the many hands-on write-ups online if you have not already.

Hololens hands on.

hands-on-with-hololens-making-the-virtual-real (arstechnica.com)

microsoft-hololens-hands (businessinsider.com)

hands-on-with-microsofts-hololens-windows-in-its-most-daring-and-unexpected-form (fastcompany.com)

microsoft-hands-on (wired)

microsoft-hololens-hands-on (engadget)

microsoft-hololens-hands-on-the-promise-and-disappointment-of-ar (pcgamer)

Nonetheless, even without experiencing it first hand, the announcement alone caused me to get goosebumps and my hair to stand up. That isn’t a metaphor – my excitement over this sort of tech can cause physical reactions in me. I am not sure what selective evolutionary pressure has resulted in me getting goosebumps over some random tech announcement, but nevertheless it happens.

It wasn’t long however before my skeptic-sense started tingling. (I was bitten by a radioactive skeptic when younger. Don’t believe me? Then you probably were too.)

It can’t really be this good already? Microsoft has investment power and skilled coders – but to be this far without any leaks?

And sure, it really wasn’t as good as it looked right now. Of course it wasn’t. But it had little to do with the actual functionality they were showing us.

The real device is bulky, it needs a power cord and it’s uncomfortable. It’s got exposed circuitry.

It’s a prototype in the most prototypiest sense.

But…it doesn’t matter.

Don’t get me wrong, I know these things will each reduce sales a lot if they remain so at launch.

But if there’s one thing I trust tech to do, it’s that it gets smaller and slicker. When has it not?

And even if it’s never going to look like the headsets they are showing off, I am sure it could be made significantly better once the final technical design is pinned down. And from the looks of it, they’re close to that.

After all, remember the form factor the SpaceGlass team managed a year ago with a similar device, despite having a fraction of the resources Microsoft has.

Finally, do looks even matter so much for a home device? I think most of us have some ugly, taped together, hack-job pieces of tech in our houses (just me?) – it’s highly unusual for people to have nothing but stylish things in their homes (source: guess). So unlike with portables, Microsoft can get away with a lot here if they need to.

I am perhaps less sure of power consumption. Running a full pc as well as all this tech off a battery with decent life seems dubious, but again, having a device plugged in isn’t such a big deal at home.

No, Hololens will succeed as long as they deliver on two things:

Microsoft is clearly showing off multitasking of AR apps.

The ability for different apps – presumably able to be made by many different companies – to run at the same time in the same space.

Multitasking is the norm for an OS maker, so this was probably a no-brainer for them. But despite that, this ups the game considerably for AR. It opens up far more possibilities for the end user, and implies strongly that the OS handles occlusion, and likely the whole rendering – freeing app developers from considerable work.

This is the other important thing:

“Tracking — which is to say, “how the headset interprets where your head is in relation to the world around you” — felt the most fully-baked of any of the headset’s sensors. Though the prototype was a bit finicky to move very quickly in, I had no issue turning around quickly or kneeling, or any other movements I tried.”- Ben Gilbert

Microsoft has what sounds like rock-solid tracking. The real and the virtual don’t slip and slide about. This is absolutely critical for AR and yet is very hard to do in real-time fast enough for the human eye.

It was #1 on my list of requirements for compositing the real and virtual. And it sounds like Microsoft has it solved. At least, for indoor environments.

It also looks like Microsoft might be solving #2 (real occlusions) and #4 (shadows) as well, at least to some extent.

While I didn’t get a clear view of the state of this from the impressions, I assume if Microsoft’s positioning is building up a point cloud of the environment, it could use the cloud to approximate occlusions on non-moving objects at least. This would match the concept art mock-ups they have done.

I also give Microsoft massive credit for being realistic with these concept art pics. Look carefully – no virtual object is darker than the background color. The darker the object, the more transparent it is. The impression of shade against background is done by lighter surrounding halos. This is really excellent attention to detail, as the majority of AR concepts don’t bother with this bit of realism, leading to a false impression of what the end product can do. (To my knowledge no AR system shown in any form, or even in theory, can selectively subtract light from the light coming into the lens. It’s all ‘light additive’ solutions, possibly supplemented by tinting the whole field of view darker.)

Hopefully the end product will use similar illusions to achieve the impression of shade against the background.
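The ‘light additive’ point is easy to show with a few lines of arithmetic. This is a deliberately crude sketch: it treats 8-bit RGB values as if they were linear light and simply adds the display’s output to the real scene, which is my simplification rather than anything Microsoft has described.

```python
# Hypothetical sketch of an additive see-through display.
# The lens can only ADD light to what is already coming through it.


def seen_through_lens(background, virtual):
    """Per channel: real light plus emitted light, clipped at full brightness."""
    return tuple(min(255, b + v) for b, v in zip(background, virtual))


wall = (120, 120, 120)

# A "black" virtual object emits nothing, so the wall shows straight through.
black_object = seen_through_lens(wall, (0, 0, 0))

# A lighter halo around it makes the untouched middle read as darker by contrast.
halo = seen_through_lens(wall, (60, 60, 60))
```

Whatever the virtual layer contains, the result is never darker than the wall itself, which is exactly why the halo trick in the concept art is needed.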

Concerns

Despite quite firmly believing Microsoft has a game changer on their hands, I do have a few concerns.

My main one is the “package” Microsoft is selling. They seem to be tying the product intrinsically to both voice control and gesture control which to me seems a very bad idea.

While techniques have improved a lot in recent years, voice control is still far from perfect and certainly not universal in terms of language and accents. For it to be needed in any way, rather than just an interface option, will reduce the system’s usability for many people.

Similarly gesture controls have a lot of issues to work out. While it’s a fair bet that pointing and clicking in 3d-space can be done reliably, more complex gestures might become increasingly finicky to detect.

Devices like the Leapmotion show potential, but also problems:

- Occlusion of hands preventing detection of any movements beyond the “shadow” they cast.

- It’s simply not comfortable to hold your hands up for extended periods.

My hope is Microsoft codes their system much like Windows has always treated the Windows pointer – allowing a wide range of inputs and the code doesn’t care, provided it gets its x/y co-ordinates and what button is pressed.
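The pointer-style abstraction I am hoping for might look something like the sketch below. Every name here (`PointerEvent`, `PointerSource`, `HandGesture`, `Stylus`) is invented for illustration. Nothing is known about how Microsoft actually structures its input layer.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class PointerEvent:
    """All an app needs to know: where the pointer is and whether it is pressed."""
    x: float
    y: float
    z: float
    pressed: bool


class PointerSource(Protocol):
    """Anything that can report a 3d pointer position (hypothetical interface)."""
    def poll(self) -> PointerEvent: ...


class HandGesture:
    def __init__(self, fingertip, pinching):
        self.fingertip = fingertip
        self.pinching = pinching

    def poll(self) -> PointerEvent:
        return PointerEvent(*self.fingertip, pressed=self.pinching)


class Stylus:
    def __init__(self, tip, button_down):
        self.tip = tip
        self.button_down = button_down

    def poll(self) -> PointerEvent:
        return PointerEvent(*self.tip, pressed=self.button_down)


def handle(source: PointerSource) -> str:
    """App code: identical whichever device produced the event."""
    e = source.poll()
    action = "click" if e.pressed else "hover"
    return f"{action} at ({e.x}, {e.y}, {e.z})"


from_hand = handle(HandGesture((0.1, 0.2, 0.5), pinching=True))
from_stylus = handle(Stylus((0.3, 0.0, 0.4), button_down=False))
```

The app-side `handle` function never asks what kind of device it is talking to, which is the whole point.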

In terms of AR that might mean, aside from gestures, a 3d stylus or mouse could be used, which many might prefer for extended periods or precision work.

AR is not a naughty word.

I am also, perhaps not concerned, but somewhat irritated by Microsoft’s choice of name.

Throughout their documentation, site and presentation they have called what they have made holograms.

But it’s Augmented Reality.

It is in no way holograms.

I “get” that some marketing team has told them more people understand holograms, but by using the term they dilute the meaning.

Holograms are wonderful things – truly amazing. For those that don’t know, or are interested in a better understanding of holography, I really recommend this video;

This video explains very well how they are made and work. It’s fascinating. (I confess this little rant is half an excuse to post this.)

What Microsoft has is nothing like this. What they have is the digital overlaying of virtual objects on the real world. Augmented Reality.

Microsoft doesn’t need to hide that term. The product sells the term, not the other way around. People don’t need a linguistic explanation – a simple picture tells you what it does.

Significant questions

- Microsoft mentioned the light inside the Hololens headset being bounced about thousands of times before releasing the light at the right angle – but how “right” is right? For it truly to be correct means recreating the whole lightfield – so different light coming in at different directions, allowing the eye to refocus at near and far virtual objects as if they were real. However, if Microsoft pulled off this incredible feat I think they would be shouting it louder. My guess is they mean letting light out at an extremely long focal length – which would emulate the virtual objects being very, very far away. While not perfect, this is still pretty good and should allow visuals that don’t cause eye strain.

- How does it work from a developer standpoint? What bits of the AR do developers have to handle themselves vs what’s taken care of by the OS? If the OS can multitask AR apps, how do apps maintain and update their 3d objects?

- How much is Microsoft opening up API and protocol wise? Can third parties make HMDs conforming to the same standards as HoloLens? Can third parties make alternative input devices for when a finger isn’t precise enough?

- Price. Time frame. Release day apps and games. (aka, the normal stuff)

In short:

HoloLens is significant. This isn’t just part of the tech cycle of “it’s like this BUT…” (…has a different screen size!). This is going to be a completely new experience for most people. It’s like the first mobile phone. Or even the first time people saw TVs.

Yes, there are concerns and it will almost certainly have flaws when released. There is a lot to learn as this is still the start of a new type of computing.

So we aren’t looking at a Tesla Roadster here – we are looking at a Ford Model-T.

But you know what?

That’s absolutely good enough for now.

Potential Hololens Applications

Despite concerns and questions, it seems likely the Hololens will provide great opportunities for application developers.

I would like to offer the following suggestions. (Many of these applications might also be possible on SpaceGlass, which is likely out sooner.)

Very simple. Just a notepad app you can stick anywhere in your room. I would be highly surprised if this wasn’t bundled with Windows 10. In fact, depending on how it’s set up, it might just be the standard Windows 10 application that can also be used in 3d-space.

However, there is scope for a developer to make this much more useful by having it network linked so people can update the notes for each other.

The “notes in the air” use-case is probably one of the most common in fiction, and is especially visible in ‘Dennou Coil’. (Dennou Coil is a 2007 animated series – recommended viewing for people in the industry due to its fairly realistic depictions of a 2020something future where AR is commonplace.)

Generic QR Code to 3D Model linking standard

A QR Code contains the data itself, easily enough for a URL. It does not need a 3rd party to create it or associate it with data. An image of a QR Code alone, when viewed with HoloLens or similar, could pop up the associated 3d model if the right viewing software is active. This would be very simple to do and would save countless magazines and catalogs from needing their own AR apps.

The advantage of having a system that stores the data in itself, rather than a 3rd party image look up, cannot be overstated. Users will not, generally, bother to download dedicated apps for each catalog or magazine they read, and when the only information you need is a trivially small string (i.e. a URL) why put extra obstacles in the user’s path?
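As a rough idea of how little is needed on the viewer side, here is a sketch of the check a generic viewer could run on the text decoded from a QR Code. The convention itself (a plain http/https URL ending in a known model extension) and the extension list are assumptions of mine; no such standard exists. Decoding the image is left to whatever QR library the viewer already uses.

```python
import posixpath
from urllib.parse import urlparse

# Assumed, purely illustrative list of model file types a viewer might accept.
MODEL_EXTENSIONS = {".obj", ".dae", ".stl"}


def model_url_from_qr(payload):
    """Return the URL if the decoded QR text looks like a 3D model link, else None."""
    text = payload.strip()
    parts = urlparse(text)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return None  # not a web link at all
    extension = posixpath.splitext(parts.path)[1].lower()
    return text if extension in MODEL_EXTENSIONS else None


chair = model_url_from_qr("https://example.com/catalog/chair.obj")
webpage = model_url_from_qr("https://example.com/catalog/chair.html")
plain_text = model_url_from_qr("hello world")
```

Only the first payload would trigger a model popup. Anything else is left for an ordinary QR reader to deal with.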

Musical Tuition software

If a real 3d-instrument can be recognized and tracked easily it would be fairly easy to make software showing you the correct hand movements to make as you play.

At the very least a piano/keyboard could be handled pretty easily – showing “ghost hands” for you to copy.

Virtual Keyboards

Another simple one. Data entry will always be required for a tech no matter what the output form is. How best do you enter data now there are three dimensions of space to play with? Do we just emulate an existing keyboard? Or will someone come up with the next Swype?

3D Modeling Application

Microsoft demoed a casual and accessible 3d program akin to TinkerCad or SketchUp.

But they also showed concepts for more professional uses:

And quite rightly too – there is huge professional scope for 3d art packages to work in real space.

As a 3d modeler myself I can attest to how much interface and clicks are devoted to moving the camera. You’re trying to create 3D objects using both a 2D output and a 2D interface – it’s very clearly far from ideal.

Autodesk, and the few remaining competing firms, should be looking very carefully at how to adapt their software, as the potential workflow improvements are immense.

My suspicion is that it will be the open source apps like Blender testing the water first, though.

Or, there’s always an outside chance Microsoft will release a new version of Truespace – the highly advanced networked 3d package they purchased but did nothing with.

For a good example of some interface concepts that might work within such an application I recommend the short film “World Builder”.

Some of these concepts, like the controls on the arm, have already been implemented in Leap Motion’s API, so developers wanting to try out the usability of such setups could do so today.

[Embedded media: Leap Motion’s Arm HUD]

Painting Application

Adapting 2d art packages is less compelling than 3d, but still useful.

While Hololens is going to be at quite a lower resolution than working on a monitor, it might be more pleasant for casual sketches.

One advantage would be being able to arbitrarily move the sketch around at different angles so that drawing (presumably with a stylus) better suits the position of the hand. Another would be to have a 3d visualization of the layer stack – almost making painting like working on the “cels” of traditional 2d animation.

There’s also interesting potential here for volumetric 3d painting – effectively a completely new class of application, as it’s only really possible with a true 3d interface. Being able to “paint” in mid-air will be a pretty new experience and the possibilities for new types of art are quite interesting.

The RAM requirements for true volumetric raster files are huge, however.
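A rough back-of-the-envelope calculation shows why. The sketch below assumes an uncompressed cubic grid with four bytes per voxel (RGBA at 8 bits per channel); both the grid sizes and the storage format are my own assumptions, and real software would likely use sparse or compressed storage instead.

```python
def volume_bytes(side, bytes_per_voxel=4):
    """Memory for an uncompressed cubic voxel grid of side x side x side."""
    return side ** 3 * bytes_per_voxel


for side in (256, 512, 1024, 2048):
    gib = volume_bytes(side) / 2 ** 30
    print(f"{side}^3 voxels: {gib:.2f} GiB")
```

Doubling the resolution along each axis multiplies the memory by eight, so under these assumptions a 1024-cubed canvas already needs 4 GiB before any layers or undo history are counted.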

Generic Board game

Sometimes the most effective ideas are the simplest. While there will surely be many brand-name board game apps released, just like there are on mobile, why not allow a generic one? The idea of casual games playable while chatting to someone might be quite popular, so simply relaying a real board game but with virtual pieces would allow two people to play quite a large selection of games while Skype, in another floating window, takes care of the face to face.[16]

For example, Chess, Draughts, Go, Backgammon etc. Many games are simple enough that they can be played without needing special “code”. The state of the pieces and how they are moved is the gameplay. Admittedly it might be nicer to have more tangible physical pieces rather than virtual ones, but the convenience of being able to play anyone in the world face to face might be quite attractive to some.

Room planner

One that already exists in early forms.

The ability when browsing a catalogue to pull up 3d models and see where they fit in your room would be quite a useful tool for marketers, as well as useful for end users. I would only urge some system that made use of the QR code system mentioned above, rather than expecting the user to download different apps for each company selling products. The fewer steps needed for the end user, the more likely they are to try it out.

Route planner/mapping

While Hololens itself is designed for indoor use, that doesn’t mean it can’t be an asset to other forms of geolocation. Physical maps can be unwieldy and awkward, so already many people use Google Maps when looking up where something is.

When planning a more complex trip, having a 3d model of the area on which you can place waypoints by simply dropping a flag might be even more intuitive – especially if the results can be shared seamlessly with others.[17]

This might be useful when visiting a city for the first time and planning what sights to visit. Having a to-scale model right in front of you will give a better sense of scale and layout.

Web browser

The big one really. A lot of the above uses could, in theory, be done with webapps. Realistically however that will be a long time coming. At the most basic level a traditional web browser could be “stuck on a wall” pretty easily.

Maybe even letting a tab be pulled from a monitor as reference while you work elsewhere.

However “2d web browser in 3d space” is clearly a rather restrictive and backwards concept.

Eventually we need an open standard for 3d content shared online.

My personal preference would be to make use of some existing standards (https, css, javascript) but not html itself. HTML is designed for varying but fixed-size 2d displays – not an infinite amount of 3d space. While there is support in modern browsers for 3d translations, the core positioning is all in 2d and would be messy to adapt to 3d. A better course, in my view, would be to develop a new markup format that uses aspects of html/css but is designed from the ground up for infinite 3d space. However, it is absolutely critical that the new standard is as open as the 2d web was.

Anyone should be able to make content, anyone should be able to run a server and anyone should be able to make a browser that views it.

It was the openness of protocols that got the web to evolve as fast as it did – failing to copy that lesson will result in a far slower uptake and a much poorer experience for the end user.

Fridgescanner

Ok, not the most inspired name.

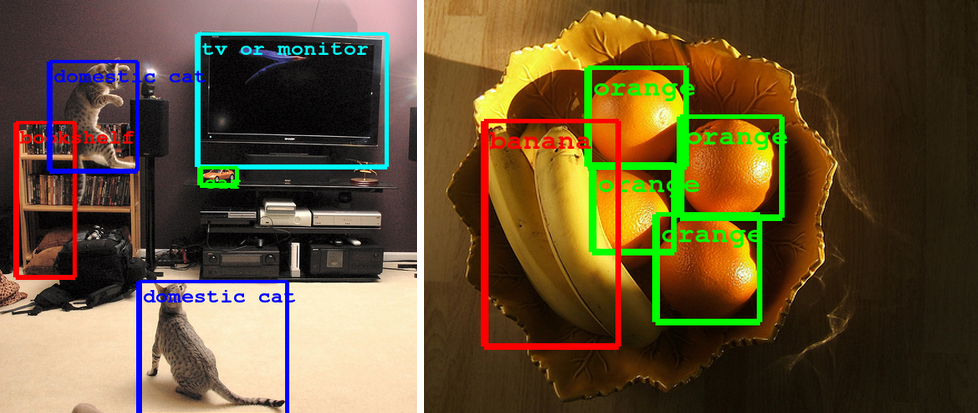

Essentially the idea is that, when you look at the inside of your fridge or cupboard, the device will do optical recognition to identify as many types of food as it can.

In this way it builds a database of what you have, and how much.

From that it can offer recipe suggestions.
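The suggestion step is the easy part once the recognition works. A minimal sketch, assuming the recognizer has already produced a dictionary of food names and counts; the data layout, the function name and the sample recipes are all invented for illustration.

```python
def suggest_recipes(inventory, recipes):
    """Return the names of recipes whose every ingredient is in stock."""
    suggestions = []
    for name, needed in recipes.items():
        # A recipe qualifies only if each ingredient is present in sufficient quantity.
        if all(inventory.get(item, 0) >= amount for item, amount in needed.items()):
            suggestions.append(name)
    return suggestions


# Hypothetical output of the recognition step: item -> count seen in the fridge.
inventory = {"egg": 6, "milk": 1, "cheese": 1, "tomato": 2}

# Hypothetical recipe book: recipe -> {ingredient: count required}.
recipes = {
    "omelette": {"egg": 3, "cheese": 1},
    "pancakes": {"egg": 2, "milk": 1, "flour": 1},
    "tomato salad": {"tomato": 2},
}

print(suggest_recipes(inventory, recipes))
```

Here the pancakes are skipped because no flour was spotted, which is also a natural hook for a shopping list.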

The image recognition tech required for this must be quite advanced, but we are getting quite close.

http://googleresearch.blogspot.nl/2014/09/building-deeper-understanding-of-images.html

Point and Control support

Many of us are starting to get various network-linked devices into our homes. I have, for example, LIFX light bulbs in my house that can change their colour or brightness over wifi from my phone.

Having a protocol to allow the control of these sorts of devices by just pointing at them and doing a relevant gesture would be much slicker than them all needing their own app loaded up each time. It could possibly work by the devices hosting their own webpage with controls that hover in the air next to them. Or, if that’s too complex, some basic JSON format with a handful of input controllers defined in it.

…Almost anything

I mean it. How many devices in our houses do we have that we don’t really need to physically touch?

Computers are the ultimate emulation device, and with the ability to output pixels to real space their emulation abilities become more powerful. Previously computers replaced typewriters, calculators, encyclopaedias, and to some extent, TV, telephones and post.

What new things can they replace the need for now?

What else this year?

I find it quite doubtful Microsoft will actually release Hololens in 2015, and so there’s still time for many others with products nearer completion to grab the limelight.

SpaceGlass, mentioned above, will be top of the list of interest for me.

If they can get the price of the Pro down to a reasonable level (i.e. less than $3000) and finish off their work on a portable unit, it could certainly catch developers’ eyes and might become an attractive product for certain industries and professionals.

Microsoft hasn’t won yet – they have merely set a nice target for everyone else.

Bright future

In 2014, after Facebook bought Oculus, maker of the Rift, Zuckerberg said he believed that sort of device would sell 1 billion units. Rather confusingly he then followed it up by saying “this sort of immersive augmented reality would become part of our daily lives for billions of people”.[18]

If he means VR by that then, to be honest, I don’t think it will come close to even a fraction of that. The Rift is a fantastic step forward for virtual reality, and it does indeed have applications outside just gaming. But for most social or practical uses? I feel AR wins out. In general, I don’t think people want to be fully closed off from the world for long periods – and even if they did, it will take many different tech devices to make “billions” of users.[19]

So, I will make a similar, but slightly more precise, prediction:

AR HMDs will, within the decade, be as widespread and as used as smartphones today.

There will be a billion AR HMD users – not of any specific model – and none released so far. But the cumulative userbase of direct descendants of the devices mentioned above? Yes. Most certainly.

We will live in a half virtual world.

And that will only be the start

[1] I made use of the JCPT 3d lib, which handled much of the 3d work.

[2] Specifically: selective sharing, realtime, federated, completely open – in short, anyone could make a client, anyone could make a server, anyone could host data and they could all talk to each other.

[3] Unpaid volunteers continue to work on the protocol under Apache, but it’s been 3 years now and there is no sign yet of the needed client-server protocol standard. In fact, their client and server are still tied together significantly in the code base, slowing possible developments for clients. But I am getting side-tracked here…

[4] (The first iOS AR Apps allowed on the store)

[5] The previous Nintendo handheld has sold 154 million units, effectively making it the joint-best selling videogame system of all time. In terms of videogames, it’s as mainstream as possible.

[6] http://www.wired.com/beyond_the_beyond/2014/02/augmented-reality-interoperability-demo

[7] http://venturebeat.com/2014/06/18/blippar-buys-augmented-reality-firm-layar/

[8] https://www.google.com/atap/projecttango/#project

[9] I was bitten by a radioactive skeptic when younger. Don’t believe me? Then you probably were too.

[10] Source: guess.

[11] To my knowledge no AR system shown in any form, or even in theory, can selectively subtract light from the light coming into the lens. It’s all ‘light additive’ solutions, possibly supplemented by tinting the whole field of view darker.

[12] I confess this little rant is half an excuse to post this.

[13] …has a different screen size!

[14] Many of these applications might also be possible on SpaceGlass, which is likely out sooner.

[15] Dennou Coil is a 2007 animated series – recommended viewing for people in the industry due to its fairly realistic depictions of a 2020-something future where AR is commonplace.

[16] See how fantastic it is to have simultaneous AR apps?

[17] My skywritter app had a working demo of this concept (see the Skywritter video page). The idea was that people could make waypoints on a web or app based map system, which could then be synced to a friend’s phone AR app in realtime.

[18] https://www.facebook.com/zuck/posts/10101319050523971

[19] All iPhone sales combined amount to about 500 million. Android sales do total about 1 billion… but that is a huge range of devices, not one.

Author thomaswrobel

Categories Augmented Reality, Hardware
